Open source software can be trusted. Or at least, that's what we have been told. And until a few years ago, this was largely true: any programmer could simply check the code and decide whether it might steal data.

But it is 2024 now, and open source software is often monstrously complex. Projects comprise millions of lines of code, especially once we count the underlying libraries and the dozens of modules downloaded during building or installation. This makes it easier for companies to hide backdoors or other malicious code, even in open source projects.

Backdoors could arrive as innocent-looking updates, as software required to install your favorite window manager, as microcode needed by some driver, or simply heavily obfuscated in open source repositories, right in front of our eyes.

It shouldn't sound like a conspiracy theory that some elaborate exploits exist in open source operating systems or browsers and go unnoticed for a while, until security researchers or geeks find them and treat them as "bugs". After all, you wouldn't expect the NSA to pay an open source developer to add a "send_data()" function that opens a TCP socket to a hard-coded address.

It could be that some of the "bugs" found every now and then in modern operating systems, browsers, or underlying libraries, the ones allowing privilege escalation or data leakage, were actually put there deliberately as hard-to-exploit backdoors. There are known failed attempts by the FBI to plant backdoors in open source projects, and you can bet this has also been done successfully, either without anyone knowing or with everyone treating the backdoor as a bug. None of this is new. This is just a friendly reminder: open source software may not be 100% trustworthy.
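How can a backdoor pass for an honest bug? The following is a hypothetical Python sketch, not taken from any real project (the names `ADMIN_ROLES`, `is_admin`, and `is_admin_fixed` are made up for illustration). It shows one classic trick: parentheses that look like a one-element tuple but are really just a string, so the membership test silently becomes a substring test.

```python
# Looks like a one-element tuple, but without a trailing comma it is
# just the string "admin".
ADMIN_ROLES = ("admin")


def is_admin(role: str) -> bool:
    """Intended: True only for the role "admin".

    Because ADMIN_ROLES is a str, `in` performs a substring test, so
    roles such as "", "a", or "dmi" are also granted admin rights.
    """
    return role in ADMIN_ROLES


def is_admin_fixed(role: str) -> bool:
    # The trailing comma makes this a real one-element tuple,
    # so `in` compares whole strings.
    return role in ("admin",)


if __name__ == "__main__":
    for role in ("admin", "guest", "dmi", ""):
        print(f"{role!r:8} buggy={is_admin(role)} fixed={is_admin_fixed(role)}")
```

A reviewer who spots the missing comma would most likely file it as a typo, which is exactly the kind of deniability described here.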

This is by no means equivalent to saying "closed source software is trustworthy". Indeed, closed source software is far more likely to spy on you, with even less elaborate techniques. The point is that, given the complexity of modern projects, you can't rest assured that no open source repo contains malicious code just because "programmers would notice". It can take programmers months to notice, let alone to disclose the bug or backdoor and fix it.

But software isn't the only concern. Hardware is one too. Hardware is no longer the dumb, passive machinery it once was: modern chips are advanced enough to host whole computers that run alongside yours.

The Intel Management Engine is a tangible example, and we can only begin to imagine how extensively it spies on everything we do. Seriously, why would it need a whole operating system like Minix for the general purposes it supposedly serves? Why not publish its internals if it is that innocent? And even if the idea of the Intel ME spying on everything is far-fetched (that would require the ME to directly control all CPU operations and carry out computations on them), it can still spy on "some" of our activity. It shouldn't be impossible for the Intel ME to log your network card activity or your keystrokes.

Even if you want to be optimistic about open source software, there isn't much open source firmware, at least not for full-blown motherboards, CPUs, and GPUs with modern capabilities. Please enlighten me if I am wrong. To my knowledge, all advanced firmware is written by corporations and is notoriously hard to reverse engineer. Once the spying is carried out at the firmware level, the privacy war is lost for good.

It seems that the only way to protect your privacy is to build your computer from scratch: design the hardware in VHDL/Verilog, and write the software running on it, including compilers, assemblers, linkers, a GUI, a network stack, etc. Good luck with that. Then you "only" have to worry about hackers and online device fingerprinting, which won't be hard at all if your machine is unique in every respect.
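To make the fingerprinting point concrete, here is a minimal Python sketch, assuming a tracker simply hashes whatever device attributes it can read. The attribute names, values, and population shares below are hypothetical, not what any particular site collects. A value shared by a fraction p of users carries -log2(p) bits of identifying information; under the simplifying assumption that attributes are independent, the bits add up, and about 33 bits are enough to tell apart roughly 8 billion people (2^33 ≈ 8.6 billion).

```python
import hashlib
import json
import math


def fingerprint(attributes: dict) -> str:
    """Hash a canonical JSON encoding of the attributes into a 16-hex-digit ID.

    Sorting the keys makes the ID independent of attribute order, so the
    same device yields the same ID on every visit, with no cookie needed.
    """
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


def identifying_bits(share: float) -> float:
    """Surprisal of a value held by `share` (0 < share <= 1) of all users."""
    return -math.log2(share)


def combined_bits(shares: list[float]) -> float:
    """Total bits, ASSUMING the attributes are statistically independent."""
    return sum(identifying_bits(s) for s in shares)


if __name__ == "__main__":
    # Hypothetical device attributes; real trackers may collect many more.
    device = {
        "user_agent": "Mozilla/5.0 (X11; Linux x86_64; rv:115.0) Gecko/20100101 Firefox/115.0",
        "screen": "2560x1080",
        "timezone": "Europe/Berlin",
        "fonts": ["DejaVu Sans", "Noto Serif", "Terminus"],
    }
    print("device ID:", fingerprint(device))

    # Hypothetical fraction of users sharing each attribute value.
    shares = [1 / 50, 1 / 200, 1 / 100, 1 / 5000]
    print(f"identifying bits: {combined_bits(shares):.1f}")
    # About 33 bits are enough to single out one person among ~8 billion.
    print(f"bits needed for 8e9 people: {math.log2(8e9):.1f}")
```

The more unusual a setup, the smaller each share and the faster the bits accumulate, which is why a home-built machine would stand out rather than blend in.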

I don't like being pessimistic, but perhaps we ought to accept that we, privacy supporters, are about to lose the war, and perhaps have already lost it. And even if we haven't yet, it is only a matter of time until all the spying is done by the hardware.

Should we give up hope? Perhaps not. There is still the alternative of voting for governments that will force corporations not to spy on us, through both enormous fines and, more importantly, actual prison sentences for key people at corporations caught spying on us. Then again, most spying is done on behalf of some government.

--------------------------------------

ADDENDUM:

(This is more about products than about software)

What everyone who cares about security *needs* to take into account is that, once we accept that both software and hardware can hide backdoors, the first products we should be afraid of are exactly the ones that claim to protect our privacy.

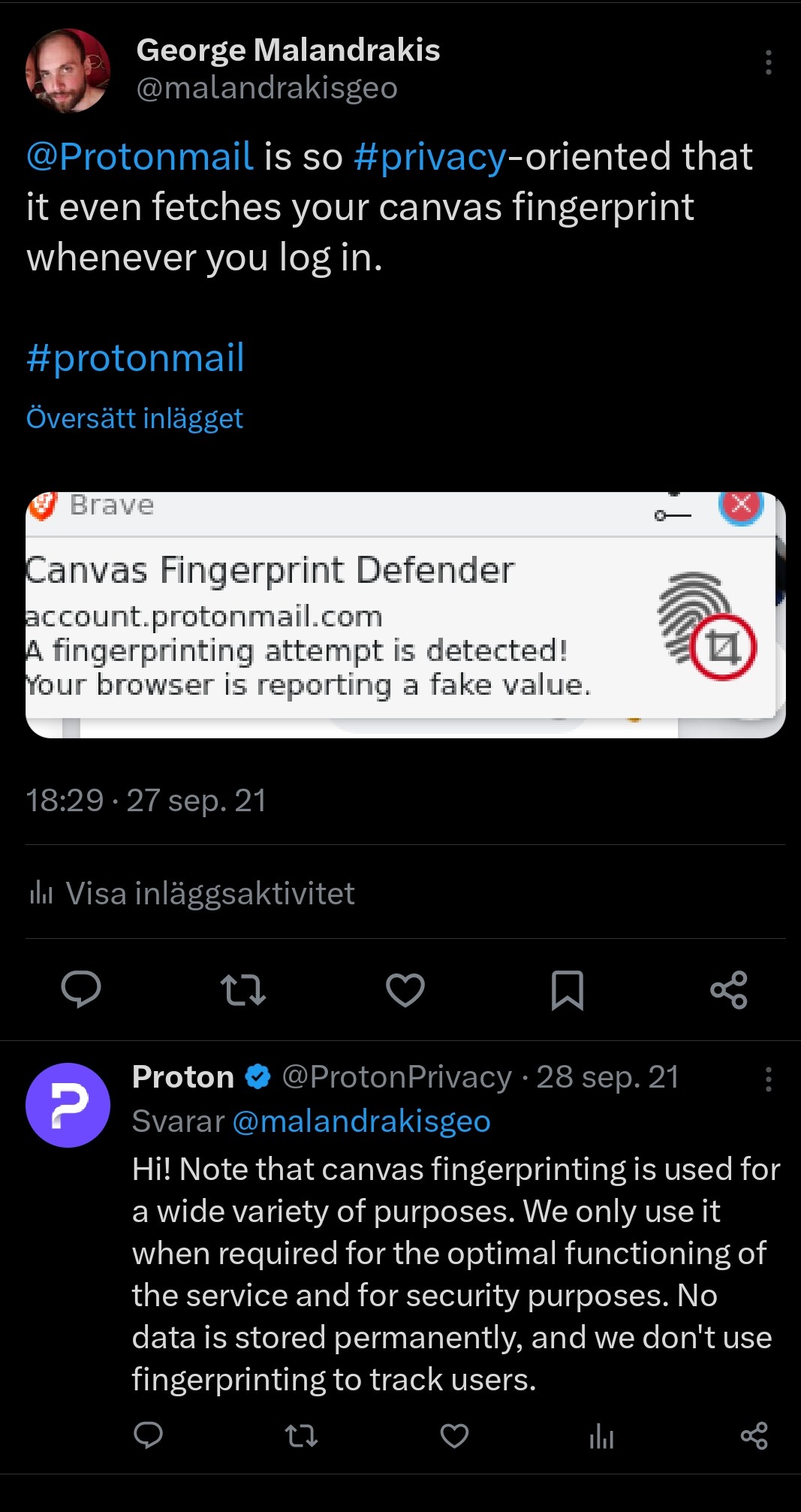

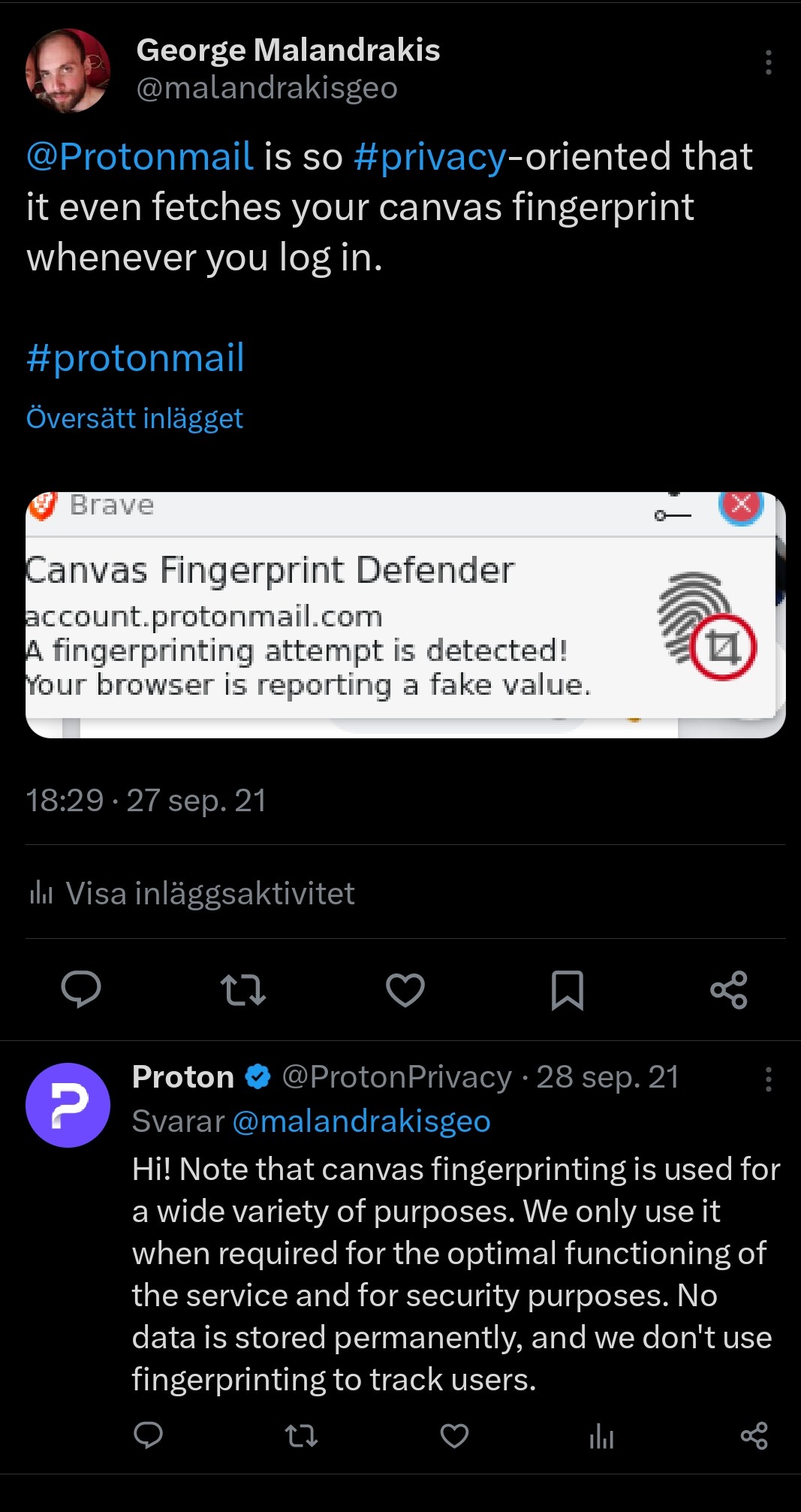

I noticed that Protonmail took a fingerprint of my browser whenever I tried to log in. I posted about it on Twitter, but it went completely unnoticed, even though Protonmail bothered to reply. I would bet that if a developer found a bug-door in open source software, unless the bug-door were very important and the developer very famous, it would most likely go equally unnoticed.

After that experience, I am extremely sceptical of privacy-oriented products like Whonix, Tails, and even the Tor Browser. Perhaps some of you will want to think twice before using them.
